Welcome, fellow developers! Today we’re diving into WebGPU JavaScript fundamentals: how to reach the GPU directly from the browser. WebGPU lifts many of the constraints of older web graphics APIs and gives you more explicit control over how work reaches the GPU. Ready to render something? Let’s get started!

What We Are Building

What excites us about WebGPU? Imagine crafting a dynamic, interactive visualization directly in your browser. For this tutorial, we’ll render a simple 2D shape: a solid red triangle on a dark blue background, redrawn whenever the window resizes. This foundational example will demystify core WebGPU concepts, preparing you for more complex projects. Think of it as your first step towards visual dashboards, immersive games, or even real-time data analysis tools right on the web.

WebGPU is trending because it’s the modern successor to WebGL, offering a much lower-level API. This aligns with native graphics APIs like Vulkan, Metal, and DirectX 12, meaning significantly better performance, more precise control, and access to advanced GPU features, including compute shaders. The web is evolving, and with WebGPU, we can bring desktop-class graphics experiences to our users without plugins.

“WebGPU provides web developers with unprecedented control over modern GPU hardware, opening doors to truly innovative and high-performance browser-based applications.”

You can apply these skills across various domains, from interactive experiences to scientific simulations. It’s an exciting time to be a web developer, pushing boundaries and innovating!

HTML Structure

Our HTML setup will be minimal: a canvas element that serves as our rendering surface, wrapped in a small container with a heading and a caption. Remember, a clean HTML structure sets the stage for powerful scripting.

<!DOCTYPE html>
<html lang="en">
<head>
  <meta charset="UTF-8">
  <meta name="viewport" content="width=device-width, initial-scale=1.0">
  <title>WebGPU JavaScript: Fundamentals Explained</title>
  <link rel="stylesheet" href="style.css">
</head>
<body>
  <div class="container">
    <h1>Exploring WebGPU Magic</h1>
    <canvas id="webgpu-canvas" width="800" height="600"></canvas>
    <p>Watch the GPU in action!</p>
  </div>
  <script src="main.js"></script>
</body>
</html>

CSS Styling

A dash of CSS makes our canvas look presentable, centering it on the page and providing some basic visual feedback. This helps to integrate our powerful GPU rendering into a user-friendly layout. We’ll keep it simple, focusing on foundational presentation.

body {
  margin: 0;
  font-family: Arial, sans-serif;
  display: flex;
  justify-content: center;
  align-items: center;
  min-height: 100vh;
  background-color: #1a1a2e;
  color: #e0e0e0;
}

.container {
  text-align: center;
  padding: 20px;
  background-color: #0f3460;
  border-radius: 8px;
  box-shadow: 0 4px 15px rgba(0, 0, 0, 0.4);
}

canvas {
  border: 2px solid #e94560;
  display: block;
  margin: 20px auto;
  max-width: 100%;
  height: auto;
}

WebGPU JavaScript: A Step-by-Step Breakdown

Now for the exciting part – diving into the WebGPU JavaScript code! This is where we bring our canvas to life, leveraging the raw power of the GPU. We’ll walk through essential steps: initializing WebGPU, preparing data, and finally rendering a basic shape. Each step guides you from an empty canvas to a vibrant display.

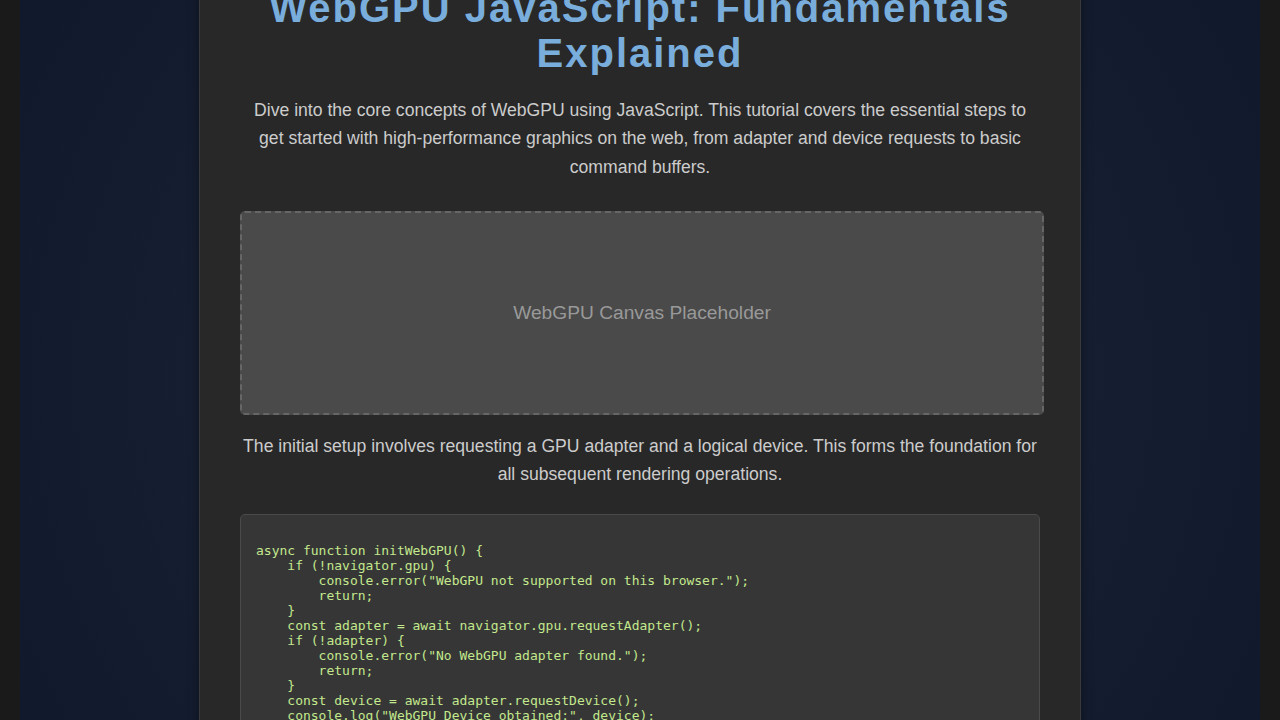

Getting the GPU Adapter and Device

Our first task is to ask the browser for access to the GPU. We request an adapter, which represents a GPU implementation available on the system, and then a device, the logical connection through which we create resources and submit commands. Think of the adapter as a description of the hardware and the device as your direct line of communication with it. We use navigator.gpu.requestAdapter() and adapter.requestDevice(). Both return promises, so we’ll use await; requestAdapter() resolves to null when no suitable adapter is available, which is why the code checks for it.

async function initWebGPU() {
  if (!navigator.gpu) {
    console.error("WebGPU not supported on this browser.");
    return;
  }

  const adapter = await navigator.gpu.requestAdapter();
  if (!adapter) {
    console.error("No WebGPU adapter found.");
    return;
  }

  const device = await adapter.requestDevice();
  // ... further setup
}

If you’ve worked with other asynchronous browser APIs (see Intersection Observer API: JS Explained with Real Examples), this pattern will feel familiar: the browser stays responsive while the hardware is initialized. This initial handshake is critical for everything that follows.
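The handshake above can also be written so that failures are thrown rather than logged, and so that a lost device is reported. The following is only a sketch: acquireDevice and describeLoss are hypothetical helper names, and the gpu parameter exists so the function can be exercised with a stand-in object outside a browser.

```javascript
// Sketch: acquire a GPUDevice, failing loudly instead of returning undefined.
// `acquireDevice` and `describeLoss` are hypothetical helper names.
async function acquireDevice(gpu = globalThis.navigator?.gpu) {
  if (!gpu) {
    throw new Error("WebGPU is not supported in this browser.");
  }

  const adapter = await gpu.requestAdapter();
  if (!adapter) {
    throw new Error("No suitable WebGPU adapter was found.");
  }

  const device = await adapter.requestDevice();

  // device.lost is a promise that resolves when the device becomes unusable,
  // for example after a driver reset or an explicit device.destroy().
  device.lost.then((info) => {
    console.warn(describeLoss(info));
  });

  return device;
}

// Turn the loss info (it carries `reason` and `message`) into one log line.
function describeLoss(info) {
  return `WebGPU device lost (${info.reason}): ${info.message}`;
}
```

A caller would typically wrap this in try/catch and show a fallback message on the page when it throws.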

Configuring the Canvas Context

With our device in hand, we need to tell our HTML canvas how it will interact with WebGPU. This involves getting its context and configuring it. We specify the device, the format of the textures we’ll be rendering to (typically navigator.gpu.getPreferredCanvasFormat()), and optionally an alphaMode like 'premultiplied' or 'opaque'. This setup ensures that our canvas is ready to receive GPU-rendered output.

const canvas = document.getElementById('webgpu-canvas');
const context = canvas.getContext('webgpu');

context.configure({
  device,
  format: navigator.gpu.getPreferredCanvasFormat(),
  alphaMode: 'premultiplied',
});

This configuration step is vital; it links the browser’s display system with our GPU operations. Without it, the GPU wouldn’t know where to draw.
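One detail the snippet above skips is that getContext('webgpu') can return null, for instance when the canvas already holds a different context type. A minimal sketch of a guard follows, assuming a hypothetical helper named configureCanvas that takes the canvas, device, and format as arguments.

```javascript
// Hypothetical helper: configure a canvas for WebGPU and return its context.
// Throws if the canvas cannot provide a 'webgpu' context.
function configureCanvas(canvas, device, format, alphaMode = "premultiplied") {
  const context = canvas.getContext("webgpu");
  if (!context) {
    throw new Error("Could not get a 'webgpu' context from the canvas.");
  }
  context.configure({ device, format, alphaMode });
  return context;
}
```

In the page itself this would be called as configureCanvas(canvas, device, navigator.gpu.getPreferredCanvasFormat()).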

Crafting Shaders: The Heart of Graphics

Shaders are small programs that run directly on the GPU. They describe how to draw things. We typically have two main types: a vertex shader that transforms geometry (like position), and a fragment shader that determines the color of each pixel. WebGPU uses a language called WGSL (WebGPU Shading Language), similar to GLSL or HLSL. We’ll define simple shaders that pass vertex positions and assign a fixed color.

// Vertex Shader
@vertex
fn vs_main(@builtin(vertex_index) in_vertex_index: u32) -> @builtin(position) vec4f {
  let positions = array<vec2f, 3>(
    vec2f(0.0, 0.5), vec2f(-0.5, -0.5), vec2f(0.5, -0.5)
  );
  return vec4f(positions[in_vertex_index], 0.0, 1.0);
}

// Fragment Shader
@fragment
fn fs_main() -> @location(0) vec4f {
  return vec4f(1.0, 0.0, 0.0, 1.0); // Red color
}

The WGSL source is passed to device.createShaderModule() as a string, which compiles it into a GPUShaderModule. Understanding shaders is fundamental to GPU graphics, as they dictate the visual appearance of everything you draw.
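The pipeline code in the next section refers to vertexShaderCode and fragmentShaderCode without showing where they come from. One plausible arrangement, sketched here, is to keep the WGSL in JavaScript template strings. The createShaderModules and formatCompilationMessage helpers and the label values are assumptions made for illustration.

```javascript
// WGSL source kept as plain strings; the names match those used by the pipeline code.
const vertexShaderCode = `
@vertex
fn vs_main(@builtin(vertex_index) in_vertex_index: u32) -> @builtin(position) vec4f {
  let positions = array<vec2f, 3>(
    vec2f(0.0, 0.5), vec2f(-0.5, -0.5), vec2f(0.5, -0.5)
  );
  return vec4f(positions[in_vertex_index], 0.0, 1.0);
}`;

const fragmentShaderCode = `
@fragment
fn fs_main() -> @location(0) vec4f {
  return vec4f(1.0, 0.0, 0.0, 1.0); // Red color
}`;

// Hypothetical helper: compile both sources into shader modules.
// Labels are optional but show up in browser error messages.
function createShaderModules(device) {
  return {
    vertexModule: device.createShaderModule({
      label: "triangle vertex shader",
      code: vertexShaderCode,
    }),
    fragmentModule: device.createShaderModule({
      label: "triangle fragment shader",
      code: fragmentShaderCode,
    }),
  };
}

// Hypothetical helper: format one compilation message for logging.
function formatCompilationMessage(msg) {
  return `${msg.type} at ${msg.lineNum}:${msg.linePos}: ${msg.message}`;
}
```

To surface WGSL mistakes early, a module’s getCompilationInfo() method returns a promise whose result has a messages array; each entry can be passed through formatCompilationMessage and logged.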

Building the Render Pipeline

The render pipeline defines the entire process from taking your raw data (vertices) to producing pixels on screen. It specifies the shaders to use, how vertices are interpreted, the output format, and depth/stencil testing. This is a powerful object that encapsulates many graphics state settings.

const pipeline = device.createRenderPipeline({
  // No bind groups are used, so the pipeline layout is empty.
  layout: device.createPipelineLayout({ bindGroupLayouts: [] }),
  vertex: {
    module: device.createShaderModule({ code: vertexShaderCode }),
    entryPoint: 'vs_main',
  },
  fragment: {
    module: device.createShaderModule({ code: fragmentShaderCode }),
    entryPoint: 'fs_main',
    // Must match the format the canvas context was configured with.
    targets: [{ format: navigator.gpu.getPreferredCanvasFormat() }],
  },
  primitive: {
    topology: 'triangle-list',
  },
});

This pipeline is a cornerstone. It orchestrates how our GPU will render the scene.

Preparing Vertex Data and Buffers

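To draw anything, we need data! For our simple triangle, we’ll define its vertex positions. This data lives in system memory, but the GPU needs its own copy. We create a GPUBuffer on the GPU, upload our vertex data, and specify its usage (e.g., GPUBufferUsage.VERTEX). Note that the vertex shader shown earlier hardcodes its positions and indexes them by vertex_index, so the pipeline as written never reads this buffer. To consume it, the pipeline’s vertex stage needs a buffers layout and the shader needs a matching @location input.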

const vertices = new Float32Array([
   0.0,  0.5, // Top
  -0.5, -0.5, // Bottom-left
   0.5, -0.5, // Bottom-right
]);

const vertexBuffer = device.createBuffer({
  size: vertices.byteLength,
  usage: GPUBufferUsage.VERTEX | GPUBufferUsage.COPY_DST,
  mappedAtCreation: true,
});

new Float32Array(vertexBuffer.getMappedRange()).set(vertices);
vertexBuffer.unmap();

Managing buffers efficiently is key to high-performance graphics. When the data changes often, for example when it comes from dynamic inputs such as those covered in LLM JSON Parsing: JavaScript Tutorial, choose your mapping and unmapping strategy carefully.
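For the buffer to actually feed the vertex shader, the pipeline has to be told how the bytes are laid out. The sketch below shows one way this could look for two 32-bit floats per vertex. The names FLOATS_PER_VERTEX, vertexBufferLayout, bufferVertexShaderCode, and vertexCount are assumptions for illustration, not part of the tutorial’s code.

```javascript
// Two floats (x, y) per vertex, as in the `vertices` array.
const FLOATS_PER_VERTEX = 2;

// Layout describing one vertex buffer slot: 8 bytes per vertex,
// exposed to the shader as a vec2f at @location(0).
const vertexBufferLayout = {
  arrayStride: FLOATS_PER_VERTEX * Float32Array.BYTES_PER_ELEMENT,
  stepMode: "vertex",
  attributes: [{ shaderLocation: 0, offset: 0, format: "float32x2" }],
};

// A vertex shader that reads its position from the buffer
// instead of a hardcoded array.
const bufferVertexShaderCode = `
@vertex
fn vs_main(@location(0) position: vec2f) -> @builtin(position) vec4f {
  return vec4f(position, 0.0, 1.0);
}`;

// Number of vertices stored in a tightly packed Float32Array.
function vertexCount(data) {
  return data.length / FLOATS_PER_VERTEX;
}
```

With this in place, the pipeline’s vertex stage would take buffers: [vertexBufferLayout] alongside its module and entryPoint, and the draw call could use vertexCount(vertices) rather than a literal 3.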

The Render Pass: Commanding the GPU

Finally, we issue the actual drawing commands. This happens within a render pass. A GPUCommandEncoder creates a GPURenderPassEncoder. We specify what color to clear the canvas with, and then we tell the render pass to use our pipeline, set our vertex buffer, and draw.

const commandEncoder = device.createCommandEncoder();
const textureView = context.getCurrentTexture().createView();

const renderPassEncoder = commandEncoder.beginRenderPass({
  colorAttachments: [{
    view: textureView,
    clearValue: { r: 0.1, g: 0.1, b: 0.2, a: 1.0 }, // Dark blue background
    loadOp: 'clear',
    storeOp: 'store',
  }],
});

renderPassEncoder.setPipeline(pipeline);
renderPassEncoder.setVertexBuffer(0, vertexBuffer);
renderPassEncoder.draw(3); // Draw 3 vertices
renderPassEncoder.end();

device.queue.submit([commandEncoder.finish()]);

This sequence of commands tells the GPU precisely what to do, from clearing the screen to rendering our triangle. Then, device.queue.submit() sends these commands for execution. This cycle typically repeats for every frame of an animation. You can learn more about graphics pipelines on the MDN WebGPU docs.
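Since the cycle repeats every frame, it helps to see what a frame loop might look like. The sketch below assumes a hypothetical renderFrame(timeMs) function that re-records and submits the render pass (a fresh context.getCurrentTexture() is needed each frame), plus two illustrative helpers: clearColorAt, which varies the background over time, and startRenderLoop, which drives the loop. The raf parameter is there only so the loop can be exercised without a browser.

```javascript
// Pure helper: a clear color whose blue channel pulses with a 2-second period.
function clearColorAt(timeMs) {
  const phase = (timeMs / 2000) * 2 * Math.PI;
  return { r: 0.1, g: 0.1, b: 0.2 + 0.1 * Math.sin(phase), a: 1.0 };
}

// Calls renderFrame once per animation frame until the returned
// stop function is invoked.
function startRenderLoop(renderFrame, raf = globalThis.requestAnimationFrame) {
  let running = true;

  function tick(timeMs) {
    if (!running) return;
    renderFrame(timeMs);
    raf(tick);
  }

  raf(tick);
  return () => {
    running = false;
  };
}
```

Inside renderFrame, the result of clearColorAt(timeMs) could be used as the clearValue of the color attachment to make the background animate.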

Making It Responsive

Ensuring our WebGPU creation looks great on any screen size is crucial. We achieve responsiveness by dynamically adjusting canvas dimensions and potentially reconfiguring our WebGPU context. For instance, update the width and height attributes of the canvas whenever the window resizes.

window.addEventListener('resize', () => {
  const dpr = window.devicePixelRatio || 1;

  // Match the drawing buffer to the displayed size in device pixels.
  canvas.width = Math.max(1, Math.floor(canvas.clientWidth * dpr));
  canvas.height = Math.max(1, Math.floor(canvas.clientHeight * dpr));

  // No reconfiguration is needed: the next getCurrentTexture() call
  // returns a texture at the new size.
  renderFrame(); // assumed to re-record and submit the render pass
});

This code resizes the canvas’s drawing buffer based on its clientWidth and clientHeight, which are controlled by CSS (including any media queries). The context does not need to be reconfigured: WebGPU takes the texture size from the canvas’s width and height attributes, and the configuration object has no size option, so the next call to context.getCurrentTexture() returns a texture at the new dimensions. Note that this approach assumes CSS gives the canvas an explicit display size (for example width: 100%); with an auto-sized canvas, changing the width attribute also changes clientWidth. Resize events can fire rapidly, so re-render only when the size actually changes. This keeps your graphics sharp and usable across devices.
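Very large windows or high device pixel ratios can push the canvas past the largest texture the device supports. A small pure function can handle the arithmetic; computeCanvasSize is a hypothetical name, and the limit passed in is assumed to come from device.limits.maxTextureDimension2D.

```javascript
// Convert a CSS size to a drawing-buffer size in device pixels,
// clamped to the range [1, maxDimension] on each axis.
function computeCanvasSize(cssWidth, cssHeight, dpr, maxDimension) {
  const clamp = (cssPixels) =>
    Math.max(1, Math.min(Math.floor(cssPixels * dpr), maxDimension));
  return { width: clamp(cssWidth), height: clamp(cssHeight) };
}
```

In a resize handler this might be used as computeCanvasSize(canvas.clientWidth, canvas.clientHeight, window.devicePixelRatio || 1, device.limits.maxTextureDimension2D), assigning the result to canvas.width and canvas.height only when the values differ from the current ones.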

Final Output

After all our hard work, what will we see? Our canvas will burst to life with a beautifully rendered, solid-colored triangle. Depending on our fragment shader, this triangle might be a vibrant red against a subtle dark blue background, providing clear visual contrast. The edges will be sharp, and any animation, if implemented, will display smoothly thanks to the GPU’s efficiency. This seemingly simple output is a monumental achievement, demonstrating direct GPU control from your browser. It provides a tiny window into the GPU’s world, ready for more advanced techniques and complex visual effects.

Embracing WebGPU JavaScript for Future Graphics

Congratulations! You’ve successfully navigated the exciting world of WebGPU JavaScript fundamentals. We explored everything from fetching the GPU device to defining shaders and orchestrating render passes. This journey highlights WebGPU’s potential for high-performance graphics and compute on the web.

You can apply these newfound skills to a myriad of projects. Think about creating interactive product configurators, browser-based CAD tools, or contributing to next-gen web game engines. The performance gains and direct hardware access offered by WebGPU are transformative. It’s a powerful tool, poised to redefine what’s possible in a browser.

“WebGPU represents a significant leap forward, bringing low-level GPU control and modern rendering capabilities to the web platform, making it a compelling choice for demanding visual applications.”

As you continue your WebGPU journey, explore advanced topics like compute shaders. The possibilities are truly endless. For deeper dives into web development patterns and accessible design, CSS-Tricks is incredibly helpful. Understanding good design practices, such as ensuring proper color contrast, can elevate your WebGPU projects, similar to how a Color Contrast Tool: HTML, CSS & JS Accessibility Guide assists in building user-friendly interfaces.

WebGPU isn’t just another API; it’s a paradigm shift. Embrace it, experiment with it, and prepare to build groundbreaking experiences. The future of web graphics is here, and you’re now equipped to be a part of it!