Hey there, future data wizard! Ever felt like you’re staring at a treasure map, and the treasure is all that juicy data locked away on websites?

You know, the kind of data you wish you could just grab for your own cool projects. Maybe you need product prices. Perhaps you want to track news headlines. Or maybe you’re building something truly innovative. Sounds familiar, right?

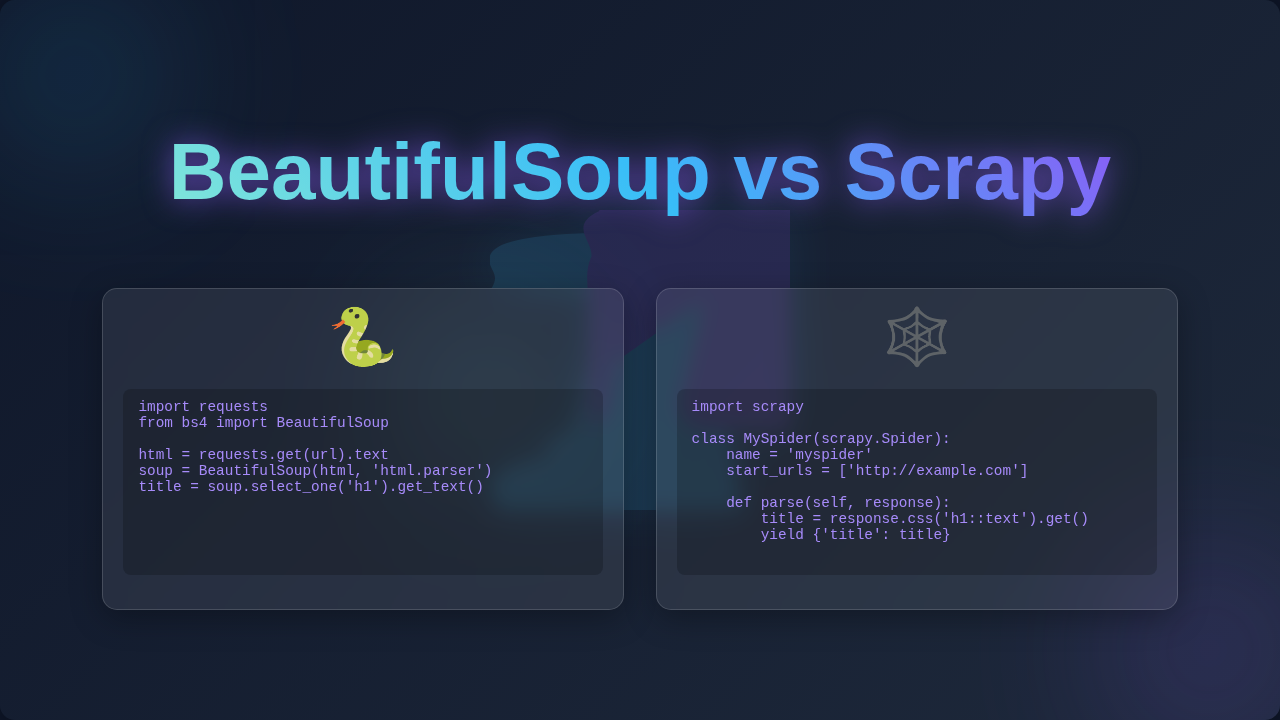

Then you dive into web scraping in Python. Suddenly, you hear about two big names: BeautifulSoup and Scrapy. You start to wonder, which one is right for you? What’s the real difference in BeautifulSoup vs Scrapy?

The Data Quest: Why It Feels So Tricky

Here’s the thing. Websites are not just simple documents anymore. Many are dynamic. This means parts of them load after the initial page view. They often update constantly. Pulling information out of them isn’t always straightforward.

You might start with a basic tool. You send a request to a website. It sends back a big blob of HTML. HTML is the raw code that builds a webpage. But often, that’s just the beginning.

That raw HTML is like a jumbled pile of LEGO bricks. You need to find specific pieces. You want the price tag, not the copyright notice. You’re looking for the product description, not the footer navigation. It feels a bit like trying to find a needle in a haystack, especially when JavaScript is constantly moving things around.

Without the right tool, you’re left sifting through lines and lines of code. It’s time-consuming. It’s frustrating. You might even feel stuck or overwhelmed by the sheer volume of data you need to parse.

Your Lightbulb Moment: Tools for the Job

Don’t worry, you’re not alone in that struggle. Many of us have been there. The good news is, Python has fantastic tools to help you extract what you need.

This is where our two contenders step in. Both BeautifulSoup and Scrapy help you extract data. However, they do it in very different ways. Think of them as different vehicles for your data journey. One is a nimble bicycle, great for quick trips. The other is a powerful cargo truck, built for long hauls.

BeautifulSoup is like a skilled artisan. It’s excellent at dissecting HTML. It helps you navigate the webpage structure. Meanwhile, Scrapy is more like a professional explorer. It not only dissects but also manages the entire expedition. It handles fetching, storing, and organizing your finds on a much larger scale.

BeautifulSoup: Your Friendly HTML Navigator

Let’s talk about BeautifulSoup first. This library is your go-to for parsing HTML and XML documents. Parsing means taking that raw HTML code and turning it into something readable. It builds a tree-like structure. This structure makes it super easy to find specific elements within the page.

Imagine you have a huge novel. BeautifulSoup helps you quickly jump to “Chapter 3, first paragraph, third sentence.” It’s incredibly precise. You can ask it to find all headings. You can tell it to grab all links. It makes sense of the chaos that is raw HTML.

You typically use BeautifulSoup with another library called requests. The requests library simply fetches the webpage content from a URL. Then, BeautifulSoup takes over. It’s like having a dedicated delivery person (requests) bring you a book, and then a meticulous librarian (BeautifulSoup) helping you find the exact information inside that book.

Its strengths are clear. It’s easy to learn. You can get started very quickly. It’s perfect for smaller, one-off scraping tasks. If you just need a few data points from a specific page, it’s a fantastic choice. You can even use it for tasks like validating links, much like a broken link checker Python script might do.

However, it doesn’t handle everything. It won’t automatically navigate to new pages. It doesn’t manage waiting times between requests. It lacks built-in features for dealing with complex website interactions. For bigger, more complex jobs, you need more horsepower.

BeautifulSoup Tip: Think of BeautifulSoup as your expert magnifying glass and compass. It helps you zoom in on specific parts of a webpage’s structure with incredible ease. Ideal for focused, single-page data extraction!

Scrapy: The Professional Web Explorer

Now, let’s look at Scrapy. This is not just a library; it’s a full-fledged web scraping framework. A framework provides a structured way to build applications. It gives you all the tools and rules you need to create complex, robust scrapers.

Scrapy handles everything within its ecosystem. It fetches the pages. It parses the HTML. It manages sessions and cookies. It can even handle things like logging in or respecting rate limits. Rate limits are rules set by websites to prevent too many requests too quickly, which is crucial for ethical scraping.

Imagine building an automated factory for data. Scrapy provides the blueprints, the assembly line, and the quality control from start to finish. It can visit thousands, even millions, of pages. It efficiently manages the entire process. This includes handling concurrency, meaning it can handle many tasks at once, dramatically speeding up your scraping.

Scrapy includes robust features like pipelines. Pipelines are components that process your scraped items after they are extracted. You can clean data, validate it, or save it directly to a database. It also has middleware. Middleware lets you modify requests or responses before they are processed. For example, you might rotate proxies. Proxies are alternate IP addresses. This helps avoid getting blocked by websites and enhances anonymity.

The learning curve for Scrapy is steeper. There’s more to set up initially. You need to understand its architecture and its specific terminology. But once you master it, you unlock immense power. You can build powerful, scalable scrapers. These can run continuously, collecting vast amounts of information. Once you have all that rich data, you might even want to serve it up with a Flask REST API.

Scrapy Tip: Consider Scrapy when you need a robust, scalable solution for extensive data collection. It’s like orchestrating a whole team of data collectors and organizers, perfect for large-scale, continuous projects!

BeautifulSoup vs Scrapy: Which Tool Should You Pick?

So, how do you decide between BeautifulSoup vs Scrapy? It really comes down to your project’s needs. Think about the scale. Consider the complexity. And evaluate your available time commitment. Neither is inherently “better”; they simply serve different purposes.

1. Project Scope and Scale

- BeautifulSoup: Best for small, targeted extractions. Need a few prices from a single page? Or maybe some headlines from one blog? BeautifulSoup is your quick, efficient helper for focused tasks.

- Scrapy: Ideal for large-scale crawling and continuous data collection. Building a massive dataset across an entire website? Or managing ongoing data collection from multiple sources over time? Scrapy is purpose-built for these kinds of marathons.

2. Learning Curve and Setup

- BeautifulSoup: Very low learning curve. You can write your first functional scraper in minutes with just a few lines of Python. It feels very immediate.

- Scrapy: Higher learning curve. It has a specific project structure you need to follow. You write ‘spiders’ within that structure. It requires understanding more concepts like pipelines, middleware, and request scheduling.

3. Features and Control

- BeautifulSoup: Primarily a parsing library. You handle the fetching, error handling, session management, and other logistical details yourself. It gives you fine-grained control over just the parsing part.

- Scrapy: A complete ecosystem. It handles fetching, parsing, data storage, error handling, retries, concurrency, and much more, all within its framework. It offers powerful, built-in solutions for complex scenarios.

Think of it this way: are you taking a rowboat out for a quick fishing trip on a small pond? That’s BeautifulSoup. Or are you launching a cargo ship to haul a massive amount of goods across an ocean, requiring a crew and sophisticated navigation? That’s Scrapy. Both are incredibly useful. They just serve fundamentally different purposes.

BeautifulSoup vs Scrapy: Practical Use Cases

Let’s make this concrete. When would you definitely reach for one over the other in real-world scenarios?

When to use BeautifulSoup:

- A Quick Price Check: You want to grab the current price of a specific gadget from a single e-commerce page, just once, for personal reference.

- Blog Post Titles: You need a simple list of the latest blog post titles and their authors from procoder09.com.

- Simple Data Extraction: Getting a table of public information from a static HTML page, like local election results or historical data.

- Prototyping: You’re testing an idea quickly. You want to see if specific data exists and is accessible before committing to a larger, more complex scraping project.

- HTML Cleaning: You have an HTML file and need to extract specific text or clean up tags, without necessarily fetching from the web.

When to use Scrapy:

- E-commerce Aggregator: Building a robust tool to compare prices and specifications from hundreds of online stores daily, tracking changes over time.

- Research Dataset: Collecting millions of product reviews, academic papers, or news articles from multiple, large domains for comprehensive analysis.

- Content Monitoring: Tracking changes on thousands of news articles or social media feeds over an extended period.

- Complex Interactions: Your scraping requires handling user logins, navigating multi-page forms, dealing with CAPTCHAs, or constantly rotating IP addresses through proxies for ethical and reliable large-scale data collection.

- API Building: You’re building a scraping service that needs to consistently fetch, process, and store vast amounts of data, potentially exposing it via an internal API.

What to Do Next: Your Scraping Journey

So, what’s your best next step? For most beginners, I highly recommend starting with BeautifulSoup. It’s less intimidating. It teaches you the fundamentals of HTML parsing and selectors without the overhead of a full framework. You’ll quickly see results. This builds confidence and a strong foundation.

Get comfortable with it. Practice selecting different elements. Understand how a webpage’s underlying structure works. Experiment with different types of websites. Mastering core Python skills, like understanding Python functions, will make your learning journey much smoother.

Then, once your projects grow more ambitious, or you hit the limits of what BeautifulSoup can easily do on its own, explore Scrapy. You’ll have a solid foundation in parsing. This makes the transition to a more powerful framework much smoother. You’ll appreciate Scrapy’s advanced features and scalability even more because you’ll understand the problems it solves.

Wrapping Up Your Data Adventure

Ultimately, there’s no single “best” tool in the BeautifulSoup vs Scrapy debate. Both are incredibly powerful Python libraries. They just excel in different scenarios. Your choice depends entirely on your specific goal, the scale of your project, and your comfort level with learning new tools.

Start small. Learn the basics of HTML parsing. Then scale up as your needs evolve. The most important thing is to just start. You’ve got this!